What security threats does AI pose?

AI introduces new cybersecurity threats by enabling faster, more adaptive attacks. Common risks include AI-generated phishing, deepfakes, automated vulnerability exploitation, data poisoning, adaptive malware, and uncontrolled internal use of AI tools. These threats are harder to detect because they evolve in real time and exploit trust, identity, and automation.

Introduction

AI did not quietly enter the enterprise. It showed up everywhere at once.

Security teams are using it for alert triage and anomaly detection. Developers rely on it for code. Employees use it to summarize meetings, rewrite emails, and analyze documents. Most of this happens outside of formal security review.

In 2024, spending on AI-native applications increased by 75%, with organizations averaging nearly $400,000 in annual spend. According to McKinsey, almost half of companies now use AI across multiple business functions.

From a cybersecurity perspective, this matters for one reason: AI systems create new paths for attackers, often faster than security programs can adapt.

AI cybersecurity is no longer a future concern. The security threats are already operational.

Why AI Creates a Different Class of Cybersecurity Threats

Most legacy security controls were built around predictability.

Malware reused infrastructure. Phishing relied on volume. Attack techniques followed patterns that could be cataloged, blocked, and tuned over time.

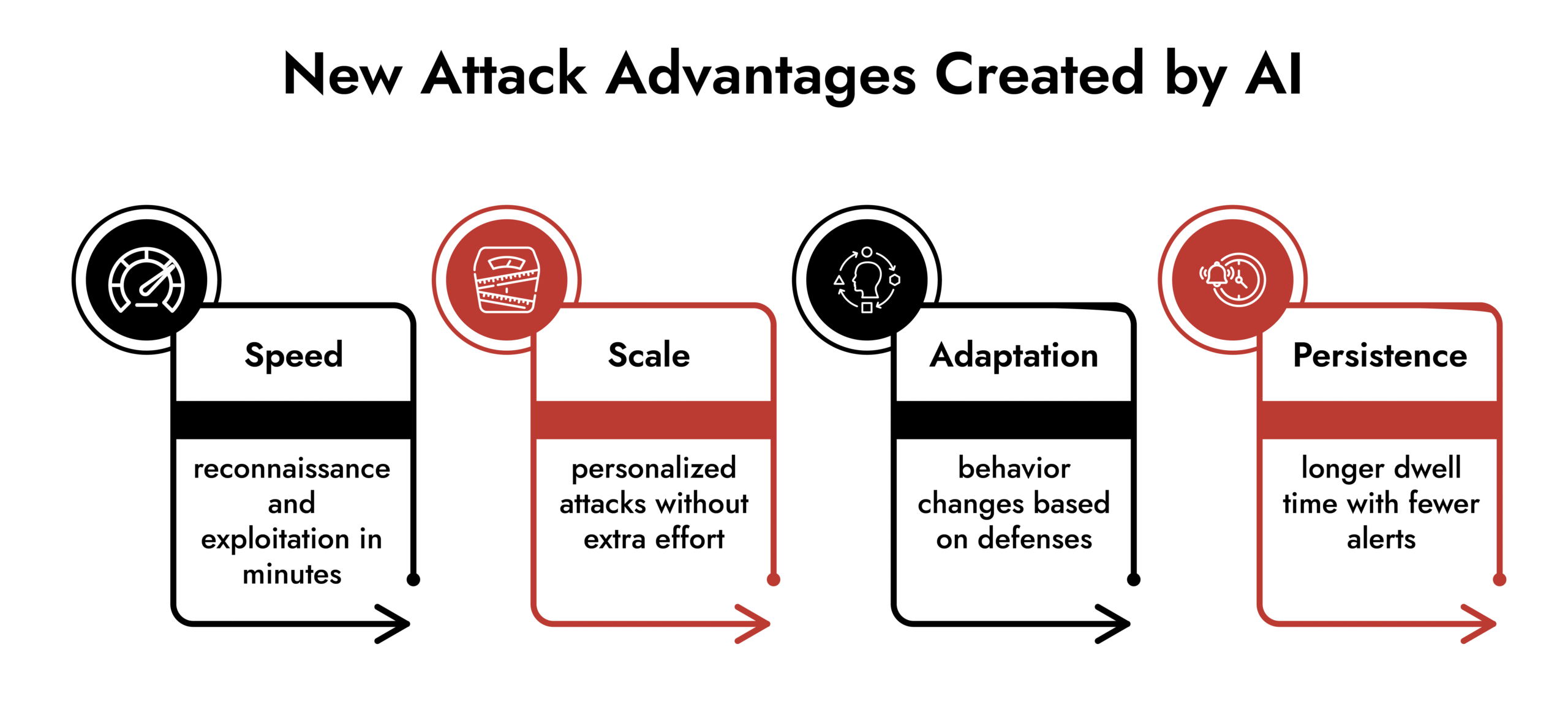

AI cybersecurity changes that dynamic.

AI-powered cyberattacks adjust as they run. They test defenses, learn which controls trigger alerts, and change behavior midstream. Instead of static indicators, security teams face activity that evolves continuously.

This forces a shift in how risk is managed. The problem is no longer just detection. It is whether security teams can trust the systems making decisions on their behalf.

The Most Pressing AI Cybersecurity Threats Today

AI-Generated Phishing That Looks Legitimate

Phishing no longer looks like phishing.

Attackers use AI to produce messages that match internal language, business context, and writing style. Grammatical errors are gone. Generic templates are gone. Even follow-up replies sound natural.

These attacks slip past email filters and catch users off guard, including technically savvy staff. Once credentials are stolen, lateral movement often begins quietly.

This is one of the most common AI cybersecurity threats seen in real-world incidents.

Deepfakes and Identity Abuse

Voice and video impersonation are no longer novelty attacks.

Security teams are seeing AI-generated audio used in payment fraud, access requests, and executive impersonation schemes. Video deepfakes add credibility to social engineering campaigns and weaken trust in identity-based controls.

Any security process that relies on “this looks or sounds real” is now exposed.

For security systems that rely on biometric verification, the assumption that a matching voice or face proves identity no longer holds.

Automated Vulnerability Discovery

Attackers increasingly use AI to automate reconnaissance.

Instead of manually probing systems, they scan continuously for weak configurations, exposed services, and overlooked dependencies. AI helps them prioritize which weaknesses are most likely to be exploitable and generates exploit paths faster than human operators could.

This compresses the timeline between exposure and compromise. Traditional patch cycles struggle to keep up.

AI-driven threat detection becomes necessary simply to match attacker speed.

Data Poisoning and Model Manipulation

Some AI cybersecurity threats do not look like attacks at first.

By manipulating training data or feedback loops, attackers can degrade model performance over time. Alerts become inconsistent. Detection accuracy drops. Analysts begin to distrust automated outputs.

The damage is gradual, which makes it harder to spot and harder to attribute. By the time teams notice, confidence in the system may already be compromised.

Prompt Injection and Model Manipulation

Prompt injection is one of the most direct ways attackers manipulate AI systems.

Instead of exploiting software vulnerabilities, attackers exploit instructions. They craft inputs designed to override safeguards, extract sensitive information, or alter how the AI behaves.

For example, an attacker may trick an AI assistant into revealing confidential data, executing unintended actions, or ignoring its own security rules. Because the system interprets the malicious input as valid instructions, traditional security controls may not detect the abuse.

This risk becomes more serious when AI tools are connected to internal systems, knowledge bases, or automation workflows. A successful prompt injection can turn a trusted system into an access point.

Unlike conventional exploits, prompt injection targets decision logic itself.

AI Agents with Excessive Access and Privileges

AI agents introduce a different level of operational risk.

Many AI agents are granted direct access to files, systems, applications, or administrative functions to perform useful tasks. They can read documents, execute commands, interact with APIs, and automate workflows across environments.

If an attacker compromises the agent, they inherit the same access.

In practice, this means:

- Access to sensitive internal data

- Ability to execute actions on systems

- Visibility into proprietary workflows

- Potential control over connected tools and services

The risk is amplified because AI agents are often deployed quickly, without the same identity monitoring, logging, and access controls applied to human users.

From a security standpoint, an AI agent should be treated as a privileged identity, not just a tool.

Without proper controls, it becomes an unmonitored access point inside the environment.
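One way to picture "agent as privileged identity" is a per-agent tool allowlist with an audit trail. The sketch below is illustrative only: `AgentIdentity`, `call_tool`, and the tool names are hypothetical and do not come from any specific agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class AgentIdentity:
    """Hypothetical record that treats an AI agent like a privileged account."""
    name: str
    allowed_tools: Set[str]
    audit_log: List[str] = field(default_factory=list)


def call_tool(agent: AgentIdentity, tool: str,
              registry: Dict[str, Callable[[str], str]], arg: str) -> str:
    """Run a tool only if it is on the agent's allowlist; log every attempt."""
    if tool not in agent.allowed_tools:
        agent.audit_log.append(f"DENIED {agent.name} -> {tool}")
        raise PermissionError(f"{agent.name} is not allowed to use {tool}")
    agent.audit_log.append(f"ALLOWED {agent.name} -> {tool}")
    return registry[tool](arg)


# Illustrative stand-ins; real tools would wrap file, API, or shell access.
TOOLS: Dict[str, Callable[[str], str]] = {
    "read_doc": lambda path: f"contents of {path}",
    "run_command": lambda cmd: f"executed {cmd}",
}

# A summarization agent gets read access only, not command execution.
summarizer = AgentIdentity(name="meeting-summarizer", allowed_tools={"read_doc"})
```

With this shape, a compromised summarizer that tries `run_command` is refused, and the denied attempt is left in the log for review.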

Shadow AI Inside the Organization

One of the most overlooked risks comes from internal use.

Security analysts paste logs into public AI tools. Developers connect AI APIs to internal environments. Teams experiment without realizing what data is leaving the organization.

This creates blind spots that traditional security tools do not monitor. Sensitive telemetry, credentials, or proprietary logic may be exposed without triggering alarms.

From a cybersecurity standpoint, shadow AI introduces unmanaged third-party risk at scale.
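As a rough illustration of reducing what leaves the organization, the sketch below masks obvious secrets and IP addresses in a log line before it is shared with any external tool. The patterns and the `redact` helper are assumptions for the example. They catch only simple `key=value` secrets and IPv4-looking strings, and are not a substitute for a data loss prevention control.

```python
import re

# Hypothetical patterns: key=value style secrets and IPv4-looking addresses.
PATTERNS = [
    (re.compile(r"(password|token|api_key)=\S+", re.IGNORECASE), r"\1=[REDACTED]"),
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "[IP]"),
]


def redact(line: str) -> str:
    """Apply each masking pattern in order and return the sanitized line."""
    for pattern, replacement in PATTERNS:
        line = pattern.sub(replacement, line)
    return line
```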

Adaptive Malware Using Machine Learning

Malware has become more evasive.

Some strains now adjust behavior based on the environment they land in. They delay execution, randomize indicators, and probe defenses before acting. Signature-based detection struggles against this approach.

These machine-learning-driven threats are designed to stay quiet until conditions are favorable, increasing dwell time and impact.


How Attackers Are Using AI in Practice

Attackers use AI to reduce effort, not to innovate.

They automate reconnaissance. They generate better social engineering content. They test evasion techniques repeatedly until something works. This lowers the skill barrier for sophisticated attacks.

As a result, AI cybersecurity threats are no longer limited to advanced threat actors. They show up in routine intrusion cases across industries.

How Cybersecurity Teams Can Reduce AI-Related Risk

Avoiding AI-related threats does not mean avoiding AI itself. It means controlling how it is introduced and used.

Treat AI Systems as High-Risk Assets

If an AI system influences security decisions, it should be threat-modeled like any other critical component.

That includes understanding:

- What data flows into the model

- Where outputs are used

- How updates are applied

- Who owns operational risk

AI that operates without clear ownership becomes a liability.
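The four questions above can be captured as a simple inventory record. This is a minimal sketch: the `AIAssetRecord` fields and the `threat_model_gaps` helper are hypothetical names chosen for illustration, not part of any standard.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIAssetRecord:
    """Hypothetical inventory entry for one AI system."""
    name: str
    data_inputs: List[str]          # what data flows into the model
    output_consumers: List[str]     # where outputs are used
    update_process: Optional[str]   # how updates are applied
    risk_owner: Optional[str]       # who owns operational risk


def threat_model_gaps(asset: AIAssetRecord) -> List[str]:
    """List which of the four questions are still unanswered for this asset."""
    gaps = []
    if not asset.data_inputs:
        gaps.append("data inputs not documented")
    if not asset.output_consumers:
        gaps.append("output consumers not documented")
    if not asset.update_process:
        gaps.append("update process not documented")
    if not asset.risk_owner:
        gaps.append("no risk owner assigned")
    return gaps


triage_model = AIAssetRecord(
    name="alert-triage-model",
    data_inputs=["EDR alerts"],
    output_consumers=["SOC ticket queue"],
    update_process=None,
    risk_owner=None,
)
```

Here `threat_model_gaps(triage_model)` would flag the missing update process and the missing owner, which is the "no clear ownership" case described above.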

Protect Against Prompt Injection

Organizations should assume prompt injection attempts will occur.

Mitigation includes:

- Restricting AI access to sensitive systems unless required

- Validating inputs and outputs

- Monitoring AI interactions for abnormal behavior

- Limiting automated execution of AI-generated actions

AI output should not automatically trigger privileged actions without verification.
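A minimal sketch of that last rule, assuming the AI system proposes actions by name: low-risk actions run automatically, privileged ones wait for a named human approver, and anything unrecognized is rejected. The action names and the `decide` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative action tiers; a real deployment would define its own.
LOW_RISK_ACTIONS = {"add_ticket_comment", "tag_alert"}
PRIVILEGED_ACTIONS = {"disable_account", "isolate_host"}


@dataclass
class ProposedAction:
    """An action suggested by an AI system, not yet executed."""
    name: str
    target: str


def decide(action: ProposedAction, approved_by: Optional[str] = None) -> str:
    """Return 'execute', 'hold_for_review', or 'reject' for a proposed action."""
    if action.name in LOW_RISK_ACTIONS:
        return "execute"
    if action.name in PRIVILEGED_ACTIONS:
        # Privileged actions never run on model output alone.
        return "execute" if approved_by else "hold_for_review"
    # Unknown actions are rejected rather than guessed at.
    return "reject"
```

Rejecting unknown action names by default matters here: a successful prompt injection often shows up as a request for something the workflow was never designed to do.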

Update Cybersecurity Awareness for AI Abuse

Security awareness programs need to change.

Employees should be trained to question urgency, authority, and realism. AI-generated messages often feel more convincing, not less. Verification matters more than intuition.

Cybersecurity awareness now focuses on trust validation, not just malware avoidance.

Use AI Where It Adds Defensive Value

AI investments should prioritize defense, not convenience.

Security teams benefit most from:

- Alert correlation

- Anomaly detection across large data sets

- Faster triage during incidents

AI-driven threat detection helps analysts focus on what actually matters instead of chasing noise.

Protect Data and Monitor Model Behavior

Models should not be trusted indefinitely.

Training data must be protected. Model performance should be monitored continuously. Unexpected changes in output or accuracy deserve investigation.

Machine learning security is an ongoing process, not a one-time deployment.
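Continuous monitoring can be as simple as comparing recent accuracy against a known baseline. The sketch below assumes analysts label a sample of model verdicts as correct or incorrect. The `AccuracyMonitor` class, its window size, and its threshold are illustrative assumptions, not recommended values.

```python
from collections import deque
from typing import Deque


class AccuracyMonitor:
    """Track rolling accuracy and flag a sustained drop below the baseline."""

    def __init__(self, baseline: float, window: int = 100, max_drop: float = 0.10):
        self.baseline = baseline
        self.max_drop = max_drop
        # Only the most recent `window` outcomes are kept.
        self.outcomes: Deque[bool] = deque(maxlen=window)

    def record(self, prediction_was_correct: bool) -> None:
        self.outcomes.append(prediction_was_correct)

    def rolling_accuracy(self) -> float:
        if not self.outcomes:
            return self.baseline  # no evidence yet, assume baseline
        return sum(self.outcomes) / len(self.outcomes)

    def needs_investigation(self) -> bool:
        return self.baseline - self.rolling_accuracy() > self.max_drop
```

A gradual poisoning campaign would show up as rolling accuracy drifting away from the baseline. In practice the check should wait until the window holds enough samples to avoid alarming on a handful of early misses.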

Maintain Visibility into AI Usage

Security teams need visibility into which AI tools are used and how data moves through them.

Without this, organizations cannot detect misuse, data leakage, or exposure early enough to respond.
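As one hedged illustration of what that visibility could look like, the sketch below totals outbound bytes per user to AI services that have not been approved. It assumes a simplified proxy log of `user domain bytes_sent` per line. The domain names are placeholders, and a real proxy or CASB export will have a different format.

```python
from collections import defaultdict
from typing import Dict, Iterable

# Placeholder domains; maintain real lists from your own proxy data.
AI_DOMAINS = {"chat.genai.example", "api.llm-vendor.example"}
SANCTIONED = {"api.llm-vendor.example"}


def unsanctioned_ai_usage(log_lines: Iterable[str]) -> Dict[str, int]:
    """Sum bytes sent per user to AI domains that are not sanctioned."""
    totals: Dict[str, int] = defaultdict(int)
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3 or not parts[2].isdigit():
            continue  # skip malformed lines instead of failing
        user, domain, bytes_sent = parts
        if domain in AI_DOMAINS and domain not in SANCTIONED:
            totals[user] += int(bytes_sent)
    return dict(totals)
```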

Keep Humans Involved in High-Impact Decisions

Automation speeds things up. It does not replace accountability.

High-risk actions should involve human review. Explainability matters, especially when AI outputs influence response decisions.

AI should support analysts, not quietly override them.

What This Means for the Cybersecurity Industry

AI is changing how attacks are built and how defenses must operate.

Security teams that rely on static controls and periodic reviews will struggle. Teams that combine AI-driven threat detection with visibility, governance, and human judgment will be better positioned to adapt.

AI itself is not a threat. Uncontrolled use is.

Frequently Asked Questions

1. Why is AI a threat to security?

Because it enables faster, more adaptive attacks that bypass traditional detection methods and exploit trust-based systems.

2. What are the main security threats posed by AI?

They include AI-generated phishing, deepfakes, automated exploitation, data poisoning, adaptive malware, and uncontrolled internal AI usage.

3. How are cybercriminals using AI in attacks?

They use it to automate reconnaissance, generate realistic social engineering content, and test evasion techniques at scale.

4. How can organizations reduce AI-related security risks?

By treating AI as critical infrastructure, strengthening awareness, protecting data and models, monitoring usage, and pairing automation with human oversight.

5. Are generative AI tools safe for enterprise use?

They can be, but only with clear policies, visibility into data flows, and continuous monitoring.
